Enterprise AI Is Failing for One Reason Nobody Talks About - Safe AI At Work

Many enterprise AI projects fail not because of the technology, but because of uncontrolled prompt chaos. Learn why prompt governance, structure, and workflows are critical for successful AI adoption.

Why do so many enterprise AI projects struggle to deliver real results, even after companies invest heavily in the latest tools and models?

The problem often isn't the technology. In many organizations, the real issue is something much simpler: prompt chaos.

Employees across departments are experimenting with AI every day.

Prompts are copied between Slack messages, Google Docs, emails, and personal notes.

Over time, this creates a fragmented environment where no one knows which prompts actually work, which versions are correct, or how certain AI outputs were generated.

80% of enterprises

According to Gartner, by 2027 more than 80% of enterprises are expected to implement formal AI governance policies to control risk, improve reliability, and manage how artificial intelligence is used across the organization.

Source: Gartner AI Governance Forecast

When prompts are unmanaged, teams frequently recreate the same instructions, produce inconsistent results, and lose valuable knowledge that could otherwise improve productivity.

What begins as innovation quickly becomes confusion.

Research highlights how widespread this challenge has become.

According to McKinsey, nearly 70% of organizations are already experimenting with generative AI, yet many lack governance frameworks to manage how it is used across teams.

At the same time, Gartner predicts that by 2027, over 80% of enterprises will implement formal AI governance policies to control risk and improve reliability.

To use AI safely at work, organizations should avoid including sensitive information in prompts, use approved AI tools, document successful prompts, and store them in shared libraries so teams can collaborate and maintain consistency.

Key Takeaways

- Many enterprise AI initiatives struggle not because of the technology, but because prompts are unmanaged, undocumented, and scattered across teams.

- Prompt chaos leads to inconsistent AI outputs, duplicated work, and lost organizational knowledge as employees recreate prompts repeatedly.

- Uncontrolled prompting can introduce security and compliance risks when sensitive company data is included in AI prompts.

- Organizations need prompt governance practices such as centralized prompt libraries, documentation, version control, and clear ownership.

- Enterprises that treat prompts as structured operational assets will scale AI faster, reduce risk, and maintain consistent AI-driven workflows.

The Hidden Risk of Using AI at Work

As artificial intelligence becomes part of everyday work, many employees are using AI tools to write emails, summarize documents, generate reports, and analyze information.

While this can improve productivity, it also introduces new risks that many organizations are not fully prepared for.

One of the biggest problems is that employees often experiment with AI independently. Prompts are written in personal AI accounts, copied from Slack conversations, or shared informally between colleagues.

Over time, this creates a lack of visibility into how AI is being used across the organization.

Without clear policies, employees may unknowingly include sensitive information in prompts, such as customer data, internal documents, financial details, or confidential company strategies.

Sending those prompts to external AI systems without proper safeguards creates data security and compliance risks.

Another issue is that prompts are rarely documented or shared properly.

Teams frequently recreate the same prompts, generate inconsistent outputs, and struggle to reproduce results that worked previously. What starts as experimentation can quickly turn into fragmented AI workflows that are difficult to manage or audit.

This challenge has become significant enough that many organizations are beginning to introduce AI governance frameworks to control how AI tools are used internally.

Some enterprise platforms, including systems like Glean, have started addressing this issue by helping companies organize knowledge and improve visibility into how information and AI interactions are managed across workplace tools.

However, technology alone does not solve the problem. Organizations still need clear policies, documentation, and collaboration around prompts to ensure AI is used safely and consistently.

Without that structure, AI adoption can easily create confusion rather than efficiency.

70% of organizations

According to McKinsey, nearly 70% of organizations are already experimenting with generative AI, highlighting how quickly AI is being adopted across industries and business functions.

Source: McKinsey Global Survey on AI

What Is Prompt Chaos in Enterprise AI?

Prompt chaos refers to a situation where employees across an organization use AI tools without any shared structure, documentation, or governance around how prompts are created and managed.

Instead of prompts being treated as reusable knowledge or operational assets, they are often written ad-hoc and scattered across different tools and conversations.

In many companies, prompts live everywhere — Slack messages, personal AI accounts, email threads, screenshots, internal documents, or temporary chat histories. Over time, this creates a fragmented environment where teams cannot easily find, reuse, or improve prompts that already work well.

Common signs of prompt chaos inside organizations include:

- Prompts being shared informally in chat tools like Slack or Teams

- Employees saving prompts in personal notes or private AI accounts

- Teams recreating prompts repeatedly instead of reusing proven ones

- No centralized library or documentation of successful prompts

- Different departments using completely different prompting approaches

- No ownership or governance around prompt creation and usage

Another challenge is the lack of documentation and version control.

When a prompt generates a useful result, it is rarely saved or documented properly.

The next time someone tries to perform a similar task, they often recreate the prompt from scratch. This leads to wasted time, inconsistent outputs, and repeated experimentation across teams.

Prompt chaos also grows when departments operate independently.

Marketing teams may use AI for content creation, HR teams for drafting policies, and product teams for research or documentation.

Because these prompts are created separately, there are usually no shared standards, templates, or guidelines for how prompts should be written or used.

For example, a marketing team might develop a prompt that generates high-quality blog outlines, while a product team unknowingly builds a similar prompt for documentation summaries.

Without a centralized system to manage these prompts, the organization loses valuable knowledge and efficiency.

As enterprise AI adoption increases, many organizations are discovering that managing prompts effectively is becoming just as important as managing the AI models themselves.

Why Enterprise AI Projects Are Struggling to Scale

Many organizations have moved quickly to adopt generative AI tools, expecting immediate productivity gains.

However, once initial experimentation begins, companies often discover that scaling AI across departments is far more complex than simply giving employees access to AI tools.

The main challenge is not the AI technology itself — it's the lack of structure around how prompts and AI workflows are managed across teams.

When prompts are created randomly, stored in personal notes, or shared informally, organizations struggle to scale AI in a reliable and consistent way.

According to Deloitte, over 70% of AI initiatives stall before reaching full deployment, often due to governance, process, and operational challenges rather than technical limitations.

Several common issues contribute to this problem.

Prompts Are Treated as Disposable Instructions

In many workplaces, prompts are written once and then forgotten.

Employees use AI tools to complete a task, generate an answer, or produce content, but the prompt itself is rarely saved or documented.

This approach means valuable knowledge disappears after each use.

If a prompt produces a useful result, it should ideally be stored, refined, and reused. Instead, teams often repeat the same experimentation again and again.

Over time, this leads to:

- duplicated effort across teams

- inconsistent AI outputs

- lost productivity from repeated trial and error

Treating prompts as reusable operational assets rather than disposable instructions is essential for scaling enterprise AI effectively.

No Governance or Prompt Ownership

Another major issue is the absence of clear ownership and governance around AI usage. In many organizations, employees are free to experiment with AI tools without any guidelines for how prompts should be created, stored, or reviewed.

Without governance, companies face several risks:

- inconsistent AI-generated outputs

- potential exposure of sensitive company data

- lack of transparency in how AI decisions are generated

- difficulty auditing or reproducing AI results

This is why many enterprises are beginning to introduce AI governance frameworks, assigning responsibility for prompt management, documentation, and oversight.

Teams Recreate the Same Prompts Repeatedly

When prompts are not stored in shared systems or prompt libraries, employees often recreate them from scratch.

Different teams may unknowingly build nearly identical prompts to solve the same problem.

For example:

- marketing teams may create prompts for blog outlines or social media posts

- HR teams may write prompts for policy summaries or employee communications

- product teams may develop prompts for documentation or research analysis

Without a shared prompt repository, these efforts remain isolated.

The result is lost organizational knowledge, slower AI adoption, and unnecessary duplication of work.

Organizations that successfully scale AI usually introduce centralized prompt libraries and documentation systems so teams can collaborate and build on each other's work instead of starting over.

The Hidden Impact of Prompt Chaos in Enterprise AI

While prompt chaos may seem like a minor operational issue, its impact on organizations can be significant.

When prompts are unmanaged and scattered across different tools, teams lose consistency, visibility, and control over how AI is being used.

Over time, this creates operational risks that can slow AI adoption and reduce trust in AI-generated outputs.

Several hidden risks often emerge when prompt management is ignored.

Inconsistent AI Outputs

AI systems respond differently depending on how prompts are written.

Even small wording changes can produce completely different results. When employees across teams create prompts independently, organizations end up with inconsistent AI-generated outputs for the same tasks.

For example, two teams asking an AI system to summarize a report may receive different conclusions simply because the prompts were written differently.

This inconsistency can quickly reduce trust in AI systems.

According to Gartner, at least 30% of generative AI projects are expected to be abandoned after proof of concept by the end of 2025, due to poor data quality, inadequate risk controls, escalating costs, or unclear business value.

Without standardized prompts or documentation, businesses struggle to produce predictable results.

Compliance and Security Risks

One of the biggest risks associated with uncontrolled prompting is data exposure.

Employees sometimes paste confidential information into prompts without realizing that external AI platforms may process or store that data.

This can include:

- customer information

- financial reports

- internal strategy documents

- proprietary code or intellectual property

According to IBM's 2023 Cost of a Data Breach Report, the average global cost of a data breach reached $4.45 million, highlighting how expensive data exposure can become.

Prompt governance helps prevent these risks by establishing rules for what information can safely be used with AI systems.
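One way such a rule could be enforced is a small screening step that runs before a prompt leaves the organization. The sketch below is illustrative only: the pattern names, the regular expressions, and the `find_sensitive` function are assumptions made for this example, not part of any specific tool, and a real policy would be defined by security and compliance teams.

```python
import re

# Hypothetical patterns for illustration only. They will miss many kinds of
# sensitive data and may flag harmless text.
SENSITIVE_PATTERNS = {
    "email address": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "card-like number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
    "confidentiality marker": re.compile(
        r"\b(?:confidential|internal only)\b", re.IGNORECASE
    ),
}


def find_sensitive(prompt: str) -> list[str]:
    """Return the name of every pattern that matches the prompt text."""
    return [
        name
        for name, pattern in SENSITIVE_PATTERNS.items()
        if pattern.search(prompt)
    ]


if __name__ == "__main__":
    draft = "Email jane.doe@example.com about card 4111 1111 1111 1111"
    problems = find_sensitive(draft)
    if problems:
        print("Review before sending:", ", ".join(problems))
```

A check like this works best as a prompt to pause and review rather than a guarantee, since simple patterns cannot recognize strategy documents or proprietary code.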

Lack of Reproducibility

Another hidden problem is that successful AI outputs often cannot be recreated.

When prompts are not documented or saved, employees may struggle to reproduce results that previously worked well.

For example:

- a prompt that produced a high-quality report summary

- an AI workflow that generated strong marketing content

- a prompt that helped analyze complex data quickly

If the original prompt is lost, the organization loses that knowledge as well.

Teams then repeat the same experimentation again, wasting time and reducing productivity.

AI Becomes Difficult to Audit

Organizations also need to understand how AI-generated outputs were created, especially in regulated industries or situations where decisions must be justified.

When prompts are scattered across personal accounts, chat tools, or temporary conversations, it becomes nearly impossible to track:

- who created the prompt

- what data was used

- which version of the prompt generated the output

- how the result was modified afterward

This lack of transparency creates challenges for compliance, governance, and internal accountability.
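The four questions above map naturally onto a small audit record. The following is a minimal sketch under stated assumptions: the `AuditRecord` fields and the helper names are hypothetical, and storing a hash of the output (rather than the output itself) is just one possible design for detecting later edits.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


def _sha256(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class AuditRecord:
    author: str                    # who created or ran the prompt
    prompt_id: str                 # which prompt was used
    prompt_version: int            # which version generated the output
    data_sources: tuple[str, ...]  # what data was supplied
    output_sha256: str             # fingerprint of the original output
    recorded_at: str               # UTC timestamp in ISO 8601 format


def record_use(author, prompt_id, prompt_version, data_sources, output):
    """Build an audit record for one AI-generated output."""
    return AuditRecord(
        author=author,
        prompt_id=prompt_id,
        prompt_version=prompt_version,
        data_sources=tuple(data_sources),
        output_sha256=_sha256(output),
        recorded_at=datetime.now(timezone.utc).isoformat(),
    )


def was_modified(record: AuditRecord, current_text: str) -> bool:
    """Report whether the text differs from the output that was recorded."""
    return _sha256(current_text) != record.output_sha256


def to_json_line(record: AuditRecord) -> str:
    """Serialize the record as one JSON line, suitable for an append-only log."""
    return json.dumps(asdict(record))
```

Appending one JSON line per AI-generated output to a shared log would be enough to answer, after the fact, who ran which prompt version against which data.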

As enterprise AI becomes more integrated into daily workflows, companies are beginning to realize that prompt management is not just a productivity issue — it is a governance and risk management issue as well.

Why Prompt Governance Matters for Enterprise AI

As organizations begin integrating AI into daily workflows, prompts are quickly becoming a critical operational component.

In many ways, prompts function similarly to software code, business workflows, or internal documentation. They instruct AI systems on how to interpret questions, process information, and generate outputs.

However, unlike traditional software development, where code is carefully documented, versioned, and reviewed, prompts are often created informally and used without oversight.

This lack of structure can lead to inconsistent outputs, duplicated work, and potential security risks.

To scale AI successfully, organizations must begin treating prompts as managed assets rather than disposable instructions.

A structured prompt governance framework typically includes several key elements.

- Prompt Documentation - Every useful prompt should be documented so teams understand what the prompt does, when it should be used, and what output it generates. Clear documentation prevents teams from recreating prompts repeatedly and helps new employees quickly adopt proven workflows.

- Version Control - Prompts evolve over time as teams refine them to produce better results. Version control allows organizations to track changes, compare prompt variations, and revert to earlier versions if needed. This approach is similar to how software code is managed in development environments.

- Centralized Prompt Libraries - Instead of storing prompts in personal notes or chat conversations, organizations benefit from maintaining shared prompt libraries. These repositories allow teams to discover existing prompts, reuse proven templates, and collaborate on improvements.

- Access Controls - Not every prompt should be available to everyone. Some prompts may involve sensitive workflows, internal data structures, or proprietary processes. Role-based access controls help ensure that prompts are shared appropriately within departments or teams.

- Audit Trails - Organizations need visibility into how AI is being used. Audit trails help track who created a prompt, when it was used, and what outputs were generated. This level of transparency becomes especially important in regulated industries or situations where AI-generated content influences business decisions.

- Prompt Testing and Validation - Just as software features are tested before deployment, prompts should also be validated. Testing helps ensure prompts produce accurate, reliable, and consistent outputs before they are widely adopted across the organization.
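Several of these elements (version control, ownership, and role-based access) can be illustrated in a few lines. This is a sketch, not a reference implementation: the `ManagedPrompt` class and its method names are hypothetical, and a real deployment would more likely build on an existing version control system or knowledge platform.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ManagedPrompt:
    name: str
    owner: str
    allowed_roles: set[str]
    versions: list[str] = field(default_factory=list)

    def publish(self, text: str) -> int:
        """Store a new version and return its 1-based version number."""
        self.versions.append(text)
        return len(self.versions)

    def get(self, role: str, version: Optional[int] = None) -> str:
        """Return the requested version (latest by default) if the role may read it."""
        if role not in self.allowed_roles:
            raise PermissionError(f"role {role!r} may not read {self.name!r}")
        number = len(self.versions) if version is None else version
        if not 1 <= number <= len(self.versions):
            raise LookupError(f"no version {number} of {self.name!r}")
        return self.versions[number - 1]

    def revert(self, version: int) -> int:
        """Republish an earlier version as the newest one, keeping full history."""
        if not 1 <= version <= len(self.versions):
            raise LookupError(f"no version {version} of {self.name!r}")
        return self.publish(self.versions[version - 1])
```

Reverting by republishing, instead of deleting newer versions, keeps the history intact so earlier outputs can still be traced to the exact prompt text that produced them.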

As AI adoption continues to grow, companies that introduce prompt governance early will be far better positioned to scale AI safely and effectively.

By treating prompts as structured assets—similar to code or workflows—organizations can reduce chaos, improve collaboration, and maintain greater control over how AI supports their operations.

Practical Steps to Fix Prompt Chaos in Your Organization

Prompt chaos is rarely intentional. It develops gradually as employees begin experimenting with AI tools independently.

Over time, prompts become scattered across chats, documents, and personal AI accounts, making it difficult for organizations to maintain consistency or learn from what works.

The good news is that fixing prompt chaos does not require complex technology.

Most organizations can significantly improve AI adoption by introducing a few simple operational practices.

1. Create a Shared Prompt Library

One of the most effective solutions is to create a centralized prompt library where employees can store and access proven prompts.

This allows teams to reuse prompts that already produce strong results instead of constantly starting from scratch.

A prompt library can include:

- prompt templates for common tasks

- descriptions explaining how the prompt should be used

- example outputs showing expected results

This helps turn prompts into shared organizational knowledge rather than isolated experiments.
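As a rough illustration, a library entry can bundle the three items listed above into one record. The `LibraryEntry` class and the sample entry are invented for this example; the placeholders use Python's standard `string.Template` syntax.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class LibraryEntry:
    title: str
    description: str     # how and when the prompt should be used
    template: str        # prompt text with ${placeholders}
    example_output: str  # what a good result looks like

    def render(self, **values: str) -> str:
        """Fill in the placeholders; raises KeyError if one is missing."""
        return Template(self.template).substitute(**values)


# Hypothetical entry for a marketing team.
blog_outline = LibraryEntry(
    title="Blog outline",
    description="Use when planning a new article; review the outline before drafting.",
    template=(
        "Write a ${sections}-section outline for a blog post about "
        "${topic}, aimed at ${audience}."
    ),
    example_output="1. Why the topic matters\n2. Common mistakes\n3. ...",
)

if __name__ == "__main__":
    print(blog_outline.render(sections="5", topic="prompt governance",
                              audience="IT managers"))
```

Because a missing placeholder raises an error instead of producing a half-filled prompt, colleagues reusing the template find out immediately which details they still need to supply.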

2. Establish Prompt Ownership

Organizations should assign clear ownership for important prompts.

When no one is responsible for maintaining prompts, they quickly become outdated or inconsistent.

Prompt owners can be responsible for:

- maintaining prompt quality

- updating prompts when workflows change

- documenting improvements and best practices

This ensures prompts evolve as teams learn how to use AI more effectively.

3. Document Successful Prompts

Whenever a prompt produces a particularly useful result, it should be documented and shared with the wider team.

This prevents valuable knowledge from being lost and allows others to replicate successful workflows.

Documentation can include:

- the full prompt

- the task it solves

- tips for improving the output

Over time, this creates a growing knowledge base of effective AI usage.

4. Introduce Prompt Review Processes

Just like code reviews in software development, prompts can benefit from review and testing before widespread use.

This helps identify unclear instructions, improve output quality, and ensure prompts do not introduce compliance risks.

Prompt reviews can check for:

- clarity of instructions

- consistent formatting

- potential data security concerns

- expected output accuracy

This improves trust in AI-generated results.
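Some of these checks can be partly automated before a human reviewer looks at the prompt. The sketch below is a deliberately simple heuristic: the `review_prompt` function, its word-count threshold, and its keyword list are assumptions chosen for illustration, and it cannot judge output accuracy, which still requires a person comparing results against expectations.

```python
import re

# Hypothetical heuristics; thresholds and keywords are illustrative only.
MIN_WORDS = 8
FORMAT_HINT = re.compile(
    r"\b(?:format|bullets?|table|json|words|sentences|paragraphs?)\b",
    re.IGNORECASE,
)
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def review_prompt(text: str) -> list[str]:
    """Return a list of findings for a human reviewer; empty means none found."""
    findings = []
    if len(text.split()) < MIN_WORDS:
        findings.append("instructions may be too short to be clear")
    if not FORMAT_HINT.search(text):
        findings.append("no output format or length is specified")
    if EMAIL.search(text):
        findings.append("contains an email address")
    return findings
```

An empty list does not mean the prompt is approved, only that none of these basic checks fired.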

5. Train Teams on Prompt Design

Many employees use AI tools without understanding how prompts influence results. Providing basic prompt training can dramatically improve output quality.

Training can cover:

- writing clear and structured prompts

- providing context and constraints

- refining prompts through iteration

Even short training sessions can help teams get far more value from AI tools.

6. Track AI Output Quality

Organizations should also monitor how AI outputs are being used and whether they are producing reliable results.

Tracking output quality helps identify prompts that need improvement and ensures AI workflows remain trustworthy.

For example, teams can review:

- accuracy of AI-generated summaries

- quality of generated content

- usefulness of AI-assisted reports

By measuring results and refining prompts over time, companies can gradually build more reliable and scalable AI workflows.
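A lightweight way to start is to collect reviewer ratings per prompt and flag the ones that score poorly. The following sketch assumes a 1 to 5 rating scale and a hypothetical `flag_prompts` helper; the threshold and minimum review count are arbitrary example values.

```python
from collections import defaultdict
from statistics import mean


def flag_prompts(reviews, threshold=3.5, min_reviews=3):
    """Return the IDs of prompts whose average rating falls below the threshold.

    `reviews` is an iterable of (prompt_id, score) pairs, with scores on an
    assumed 1-5 scale. Prompts with fewer than `min_reviews` ratings are
    skipped so that a single bad rating does not trigger a flag.
    """
    scores = defaultdict(list)
    for prompt_id, score in reviews:
        scores[prompt_id].append(score)
    return sorted(
        prompt_id
        for prompt_id, values in scores.items()
        if len(values) >= min_reviews and mean(values) < threshold
    )


if __name__ == "__main__":
    sample = [
        ("report-summary", 5), ("report-summary", 4), ("report-summary", 5),
        ("blog-outline", 2), ("blog-outline", 3), ("blog-outline", 4),
        ("data-analysis", 1),
    ]
    print(flag_prompts(sample))
```

Flagged prompts can then go back to their owners for revision, which ties output tracking to the ownership and review steps described earlier.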

The Future of Enterprise AI Depends on Prompt Management

As organizations continue investing in artificial intelligence, many assume success will come from choosing the most powerful models or the newest AI platforms.

In reality, long-term success will depend far more on how organizations manage the way AI is used across teams.

AI models are becoming increasingly accessible, and many companies now have access to similar technology. What separates successful AI initiatives from struggling ones is often operational discipline—how prompts are structured, shared, documented, and governed.

In the future, prompts will likely be treated as core operational assets within organizations.

Just as businesses manage software code, internal documentation, and business processes, prompts will require clear ownership, collaboration, and lifecycle management.

Organizations that take prompt management seriously will benefit in several ways:

- more consistent AI outputs across teams

- faster adoption of successful AI workflows

- improved transparency in how AI decisions are generated

- reduced risk related to security, compliance, and data exposure

Over time, prompt governance will become as essential as other enterprise governance practices. Companies will increasingly place prompts within broader frameworks that already exist for managing critical systems.

For example, prompt governance will begin to sit alongside:

- data governance, which controls how organizational data is stored and used

- security policies, which protect sensitive information and access to systems

- software development practices, which manage version control, testing, and deployment

The organizations that succeed with enterprise AI will not necessarily be those with the most advanced models. Instead, they will be the ones that build strong systems for managing how AI is used, improved, and trusted across the organization.

In other words, the future of enterprise AI will depend not just on technology, but on how well companies manage the prompts that guide it.

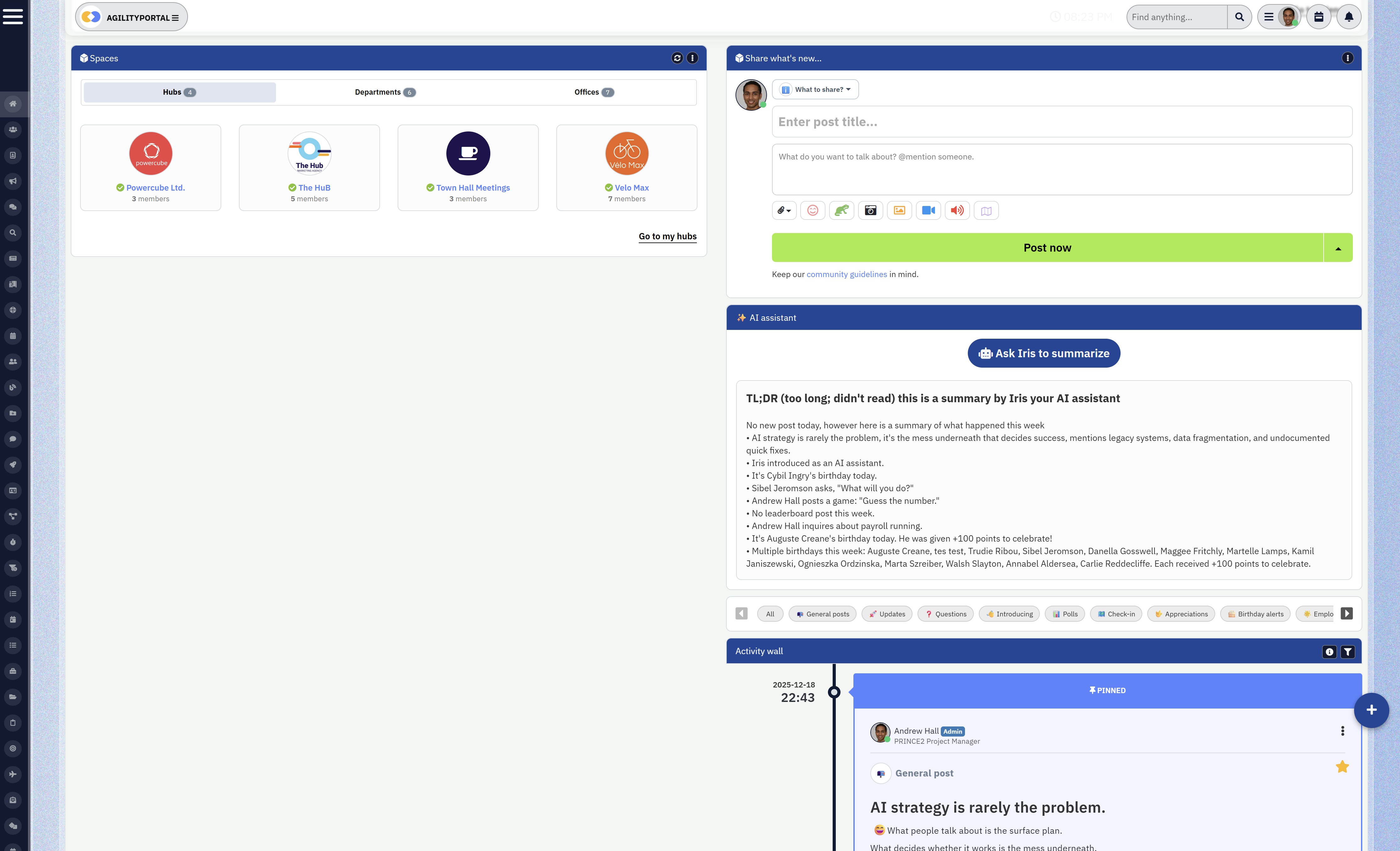

How Digital Workplace Platforms Can Help Manage AI Workflows

As organizations experiment with AI across different departments, one of the biggest challenges is keeping knowledge organized and accessible.

When prompts, AI experiments, and generated outputs are scattered across chat tools, emails, and personal documents, teams quickly lose visibility into what is working and what is not.

This is where digital workplace platforms play an important role.

By providing a centralized environment for collaboration, documentation, and knowledge management, these platforms help organizations bring structure to how AI is used across teams.

Instead of employees working in isolation, digital workplace systems allow organizations to capture and share AI knowledge more effectively.

For example, teams can use centralized platforms to support:

- Knowledge sharing across departments so employees can learn from successful AI use cases

- AI prompt documentation, allowing teams to store and reuse proven prompts

- Collaboration around AI experiments, where teams refine prompts and workflows together

- Workflow approvals, ensuring AI-generated content is reviewed before being published or distributed

- Governance controls, helping organizations track how AI tools are being used internally

- Secure knowledge bases, where prompts, documentation, and AI guidelines can be stored safely

When these capabilities are combined, organizations can begin to treat AI workflows as structured processes rather than informal experiments.

Centralized collaboration platforms also help reduce duplication of effort.

Instead of multiple teams creating similar prompts independently, employees can discover existing prompts and build on previous work.

This speeds up learning, improves consistency, and allows organizations to scale AI adoption more confidently.

As enterprise AI becomes more integrated into daily work, companies will increasingly need structured environments where AI knowledge, prompts, and workflows can be documented, shared, and governed.

Digital workplace platforms provide the foundation that makes this possible.

Conclusion

Enterprise AI adoption is accelerating, but many organizations underestimate how difficult it is to manage AI at scale.

While companies focus heavily on choosing the right model or platform, the real challenge often lies in how prompts are created, shared, and maintained across teams.

Without governance, documentation, and collaboration, prompt chaos quickly emerges — leading to inconsistent results, lost knowledge, and stalled AI initiatives.

Organizations that treat prompts as structured assets, rather than disposable instructions, will be far better positioned to scale AI successfully.

AI Summary

- Many enterprise AI projects struggle not because of the technology itself, but because organizations lack structure around how prompts are created, shared, and managed.

- Prompt chaos occurs when employees experiment with AI independently, storing prompts in chats, documents, or personal AI tools without documentation or governance.

- This lack of prompt management leads to inconsistent AI outputs, duplicated work across teams, and loss of valuable organizational knowledge.

- Uncontrolled prompting can also introduce security and compliance risks when sensitive company information is included in prompts sent to external AI platforms.

- Organizations that implement prompt governance practices—such as prompt libraries, documentation, version control, and clear ownership—can scale AI more safely and effectively.

- As enterprise AI adoption grows, managing prompts will become a critical part of AI governance, helping organizations maintain consistency, transparency, and trust in AI-driven workflows.
